How We Govern Data at Scale

This story is also published on Medium.

Some fears and truths about data governance and automatic data capture

For some time now, there’s been a misconception that manual tracking is the best approach to maintaining a reliable, accurate, and trustworthy dataset. Even proponents of manual tracking concede that autocapture wins on ease of use and time to value, but they often assert that autocapture will produce an ungovernable mess of undifferentiated data. We’d like to set the record straight.

When done right, automatic capture builds in data governance as a core architectural principle, not an activity that’s added on later. This is what we’ve done at Heap. This not only ensures that an autocaptured dataset can be properly managed; it actually produces a superior environment for data governance. Here’s why.

What is data governance?

First, some quick contextual information.

Data governance is the set of strategies an organization uses to collect, manage, secure, and extract value from data. Typical governance goals are accuracy (ensuring the data is fresh, reliable, and trustworthy), organization (ensuring that data is consistently structured, labeled, named, and stored so it is easily discoverable), and security (ensuring that data handling complies with regulations, respects privacy, and minimizes the risk of leaks and unauthorized access).

Now, some fears, myths, and truths.

MYTH: It’s impossible to keep large datasets organized.

TRUTH: Completeness is critical to good governance.

Completeness and good governance are each valuable on their own, but the most important thing to realize is that they are deeply intertwined. It’s where the chocolate meets the peanut butter.

After all, the whole point of collecting data for digital analytics is to generate meaningful insights that improve products, user experiences, and conversion rates. So the more data you have, the more you can do with it. Period. To run a successful analytics initiative, you need two things: the right dataset, meaning one that’s complete and ready for any question the team might ask of it, and proper organization of that data (aka good governance), so that each digital event is rigorously managed end-to-end.

It’s easy to assume that more data would be harder to govern. But let’s examine this idea.

Manual-tracking advocates claim that if your dataset is small enough, you can stay on top of it all. But a deliberately small dataset leaves a huge amount of information that can never be queried, because it was never collected. Would it have been useful? Maybe really important? Maybe even revolutionary for your product? Who knows.

That’s a problem.

MYTH: Manual tracking makes data easier to govern.

TRUTH: Manually tracked datasets take more work to govern than automatically captured datasets do.

An equally significant problem is a badly governed dataset, one that lacks organization and precision. Then you can’t trust the data you DO have, so its size is beside the point.

Here’s how governance works in a manual-tracking environment: teams create a “tracking plan.” This is a spreadsheet listing every event they plan to track, where each one lives in the codebase, its name, current status, properties, and so on. All the governance happens in this spreadsheet, outside the analytics tool, so the platform is no longer the team’s source of truth.

We’re not hating on spreadsheets; they’re great. But the larger and more comprehensive they become, the harder they are to manage and keep reliable. It’s nearly impossible to avoid simple errors: outdated naming on a JIRA ticket, a typo when instrumenting code, or two similarly named events. Each is minor on its own, but every discrepancy nudges the dataset closer to chaos.

As you scale, spreadsheets become monsters: they grow rapidly, give PMs far more work to do, and create more potential points of failure for data completeness. Ironically, they then become the place where your data accumulates errors, instead of keeping everything shipshape as you intended.

Let’s face it: managing a spreadsheet across teams is hard. When multiple people have access, it’s difficult to enforce conventions and ensure that best practices are followed. All it takes is one tired project manager fat-fingering a few entries, and the whole dataset becomes suspect. It’s far better to have a system that keeps everything organized within the platform.

MYTH: Manual tracking is precise because you know exactly what you’ve got.

TRUTH: Automatic data capture gives more precision, with way fewer limits!

OK, in some sense you do know precisely what you’re getting with manual tracking: not much. Ultimately, arguments for governing a manually tracked dataset boil down to some version of “our method keeps the dataset small.” The idea is that by tracking only a limited number of events, you can keep naming and definitions consistent and avoid problems like broken and outdated events.

That may be true, but the tradeoff is rather severe. As we like to say: if there are only three books on your shelf, it’s pretty easy to keep them organized, and you’ll definitely know where each one is. But you only have three books! That might not give you all the information you need, and it certainly doesn’t give you much reading variety.

To extend the metaphor, building automatic capture into your governance is like having the Dewey Decimal System. You’re no longer limited to the number of books you can keep track of on a small shelf. Instead of three books, you can build an entire library, with a way to quickly and efficiently find what you’re looking for and to add as much new information as you please to your well-governed system.

Autocapture is built to seamlessly capture all interactions and behaviors from the moment of installation onward. It requires only a single JavaScript snippet added to the header of a site or application. From then on, event activity is tracked automatically: every click, swipe, form fill, pageview, and more.

FEAR: Autocapture will produce mountains of unverified data.

TRUTH: When built right, autocapture systems verify data and keep it organized from the moment of definition.

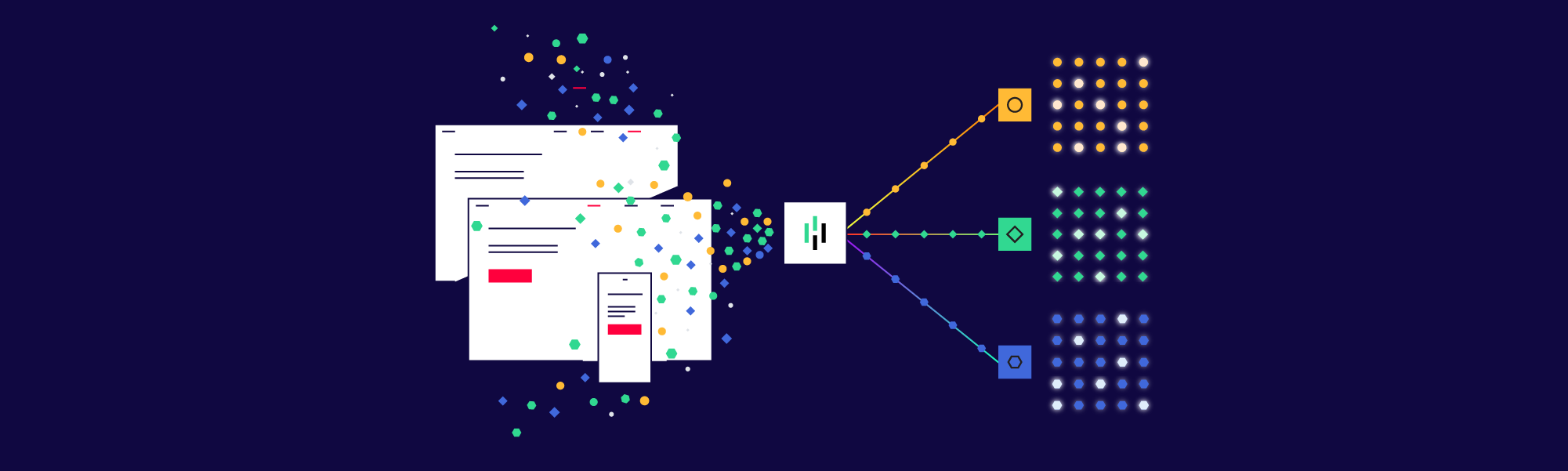

There is a smidgen of truth here. Autocapture does produce lots of unverified data, at least at first, because that’s how it works. But it’s very important to distinguish event collection from event definition. So let’s go a little deeper into how virtualization works, because this is where the magic happens.

Verification and governance apply once an event is defined: named according to preset conventions, classified, and validated. This definition process means that the moment an event enters your analysis environment, it’s already fully organized. At the same time, autocapture preserves the raw data layer and keeps it intact. This separation is what lets you have all of the data with zero loss in organization.
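To make the split between collection and definition concrete, here is a minimal Python sketch. It is not Heap’s implementation or API: the `RawEvent`, `EventDefinition`, and `VirtualLayer` names, the event fields, and the “Object - Action” naming convention are all hypothetical, chosen only for illustration. Raw events are appended untouched; a definition is a named matching rule that is checked against the naming convention when it is created and then evaluated over the whole raw store, including events captured before the definition existed.

```python
import re
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical naming convention ("Object - Action"), assumed for illustration only.
NAME_PATTERN = re.compile(r"^[A-Z][A-Za-z]*( [A-Z][A-Za-z]*)* - [A-Z][A-Za-z]*( [A-Z][A-Za-z]*)*$")


@dataclass(frozen=True)
class RawEvent:
    """One autocaptured interaction, stored exactly as collected (hypothetical shape)."""
    kind: str        # e.g. "click" or "pageview"
    selector: str    # CSS selector of the element; empty for pageviews
    path: str        # page path where the event happened
    timestamp: int   # seconds since the epoch


@dataclass(frozen=True)
class EventDefinition:
    """A named, governed view over raw events: a name plus a matching rule."""
    name: str
    matches: Callable[[RawEvent], bool]


class VirtualLayer:
    def __init__(self) -> None:
        self.raw: List[RawEvent] = []                      # collection: append-only
        self.definitions: Dict[str, EventDefinition] = {}  # definition: curated names

    def capture(self, event: RawEvent) -> None:
        # Collection never depends on any definition existing.
        self.raw.append(event)

    def define(self, definition: EventDefinition) -> None:
        # Governance rules are enforced at definition time, not at capture time.
        if not NAME_PATTERN.match(definition.name):
            raise ValueError(f"{definition.name!r} does not follow the naming convention")
        if definition.name in self.definitions:
            raise ValueError(f"{definition.name!r} is already defined")
        self.definitions[definition.name] = definition

    def query(self, name: str) -> List[RawEvent]:
        # Definitions are evaluated over the full raw store, so they apply
        # retroactively to events captured before the definition was created.
        definition = self.definitions[name]
        return [e for e in self.raw if definition.matches(e)]


layer = VirtualLayer()
layer.capture(RawEvent("click", "button#signup", "/pricing", 1))
layer.capture(RawEvent("click", "a.docs", "/home", 2))
layer.capture(RawEvent("pageview", "", "/pricing", 3))

# Defined after the events were captured; it still matches the earlier click.
layer.define(EventDefinition(
    "Pricing - Click Signup",
    lambda e: e.kind == "click" and e.selector == "button#signup",
))
print(len(layer.query("Pricing - Click Signup")))  # 1
print(len(layer.raw))  # 3: the raw layer is untouched
```

In this sketch, definitions only read from the raw store, so a definition can be added or corrected later without changing anything that was captured.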

The creation of that data ‘virtualization’ layer lets you construct a clean dataset for analysis while preserving all of your rich underlying data. Within the virtual layer, a set of built-in tools keeps everything organized, accurate, and verified.

A data dictionary gives your whole team a single source for relevant data, including events, properties, and user segments. It’s easy to archive old events while maintaining historical continuity. You can also set alerts for event inactivity and use permission levels to restrict access. No more spreadsheets being passed around and spreading out of control!

As we said earlier, best practices are built right into the architecture. Teams can harness all the data they need at every stage of an event’s lifecycle.

So let’s review the principles of success for any analytics project:

Data-driven decisions require the right data.

Only a well-governed dataset can reliably answer critical questions.

A complete dataset makes it possible to ask questions you wouldn’t have known to think of in advance.

When you are asking fresh questions, you get the gold: INSIGHTS. New, actionable information about your product and your users that was previously unavailable or invisible to you.

TRUTH: Autocapture is the way forward for data-driven teams.

Automatic data capture gives teams throughout your company an obvious advantage by providing a comprehensive, retroactive dataset without any ongoing schema planning or manual implementation.

At Heap, we believe that a complete and well-governed dataset is the key to answering the questions the team knows to ask today, and to discovering the unexpected insights that will lead to extraordinary digital products and experiences tomorrow.